“The bottom line is: we take misinformation seriously.”

Mark Zuckerberg, founder and chief executive of Facebook, wrote this in his blog on November 18. He also outlined ways the company plans to deal with “hoax” news being propagated through its website – news that could have affected the outcome of the US presidential election.

It was a defence against a threat that shows signs of creating fissures in the fabric of the Facebook business, which has seen stellar success over the past 10 years. Integrating the content of others into bespoke news feeds appears not to be as easy as it once seemed.

One week earlier Mr Zuckerberg took a less proactive position.

“I believe we must be extremely cautious about becoming arbiters of truth ourselves”, he wrote, adding that “of all the content on Facebook, more than 99 per cent of what people see is authentic”.

This refuge in the 99 per cent authenticity of Facebook content was short-lived. A day later, a post by blogger Mike Caulfield indicated that fake news from clearly bogus sites was propagated through Facebook news feeds more extensively than news items from trustworthy sources.

In journalism, stories of “man bites dog” are naturally more popular than the reverse. But that comparison assumes both stories are equally true. Popularity based on outrageous hoaxes is an altogether more existential threat to Facebook.

The issue gathered momentum three days later in an article published on BuzzFeed News that was further articulated by Vox on the same day.

During the last three months of the US presidential campaign, the 20 best-performing election stories from 19 major news websites generated a total of 7,367,000 shares, reactions, and comments on Facebook.

The 20 top-performing false election stories from hoax sites and hyper-partisan blogs generated more traffic – 8,711,000 shares, reactions, and comments.

Hoax sites surveyed included the Denver Guardian and Ending the Fed. Many of these sites have been operational for only a few months but have seen exceptional growth – certainly in comparison with Chief-Exec.com.

A Facebook spokesman told BuzzFeed News that “there is a long tail of stories on Facebook. … It may seem like the top stories get a lot of traction, but they represent a tiny fraction of the total”.

However, what to do about news items such as “Pope Francis Shocks World, Endorses Donald Trump for President” and “WikiLeaks CONFIRMS Hillary Sold Weapons to ISIS” must be troubling Mr Zuckerberg. What to do about the huge and problematic grey area that separates fact from fiction, and which includes satire and entertainment, must be equally worrying.

The difficulty arises in reconciling Facebook as a network for individuals and organisations to communicate, whatever the veracity of their content, with its alter ego as a news channel where the relationship between information and truth does matter.

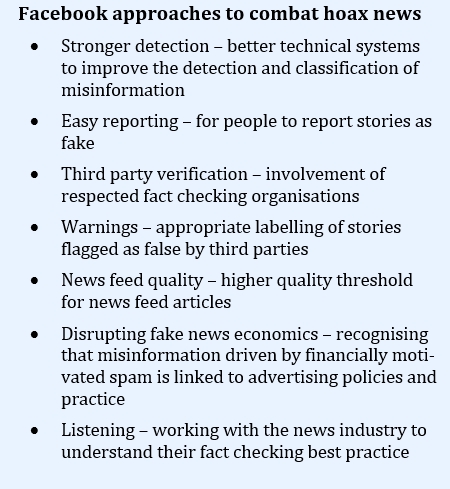

Mark Zuckerberg’s most recent communication pointed to some work-in-progress operational measures (see box), but these do not show that this fundamental dichotomy is being addressed. He did, however, recognise that “the problems here are complex, both technically and philosophically”.

Mr Zuckerberg is not alone in thinking this. The great Scottish philosopher David Hume concluded in 1748 that human knowledge is ultimately founded only on experience. Even scientists find that the truth of their theories is only provisional, owing to Hume’s problem of induction.

The knowledge theory of Hume could offer Facebook, or perhaps the competition, an escape from a gangrenous future of needing to be the arbiter of truth.

Hume’s problem of induction remained unsolved for two centuries, until 1934 when Karl Popper pointed to a solution. This is based upon recognising an asymmetry in empirical evidence. While no number of positive observations can prove a theory, a single negative finding can disprove one.

A famous example uses the claim that “all swans are white”. Seeing white swans in a village pond tells you nothing at all about the truth of this statement. But observing a single black swan shows it to be false.

Black Swan on Herdsman Lake, Western Australia. Credit: David Brown

Moving towards truth is therefore a journey of challenging one’s favourite theories to seek evidence that they are wrong. The harder you try, the closer you will get to the truth, but you will never actually get there.

Karl Popper also identifies three worlds that we as humans inhabit. World 1 is the objective physical world. World 2 is the subjective world of thought that goes on inside our heads. World 3 describes concepts that originate in World 2 and then escape its subjective borders to get outside and be transformed into objective knowledge – books, theories, designs, etc.

Popper proposes that this World 3 transformation depends on the concepts being adopted by people and, in doing so, they become objective entities in our societies. Even 50 years ago he could not have foreseen that such adoption could be facilitated by likes, shares and retweets. Popper would probably have considered the normal flow of information through social networks too ephemeral to be important for the creation of objective knowledge. The tendency for false information to become viral and propagate through these networks would be much more bothersome.

For Popper the creation of objective knowledge is fundamental for the maintenance and defence of an open society. So, Sir Karl Popper and Mark Zuckerberg might have shared a common problem. One could imagine how they might work together on a solution.

“Big data” has a seemingly infinite capacity to supply the empirical evidence to feed Karl Popper’s rationale for knowledge creation. By adding in the wisdom of the crowd, artificial intelligence and the semantic encoding of the epistemology of Popper, Mr Zuckerberg might be able to escape his conundrum and avoid a dystopian Facebook future where the veracity of information is irrelevant.

Internet innovators, entrepreneurs, sociologists and philosophers – this could be your cue.

by John Egan

Conflict of interest statement

Chief-Exec.com makes every attempt to ensure its reported news is valid. News sites designed to attract visitors and gain advertising revenue with sensational fabricated stories poison the news environment as people show mistrust for online content. Chief-Exec.com recognises a conflict of interest in the reporting of this story.